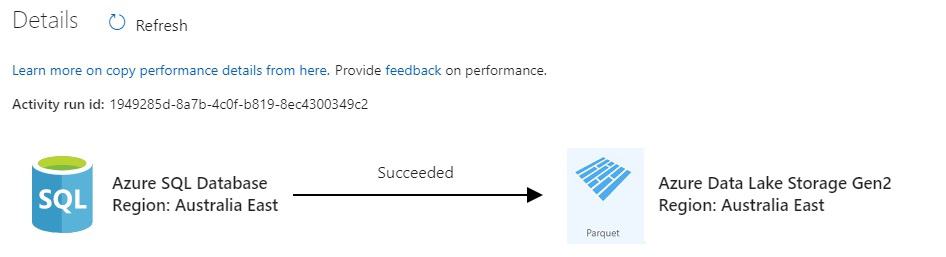

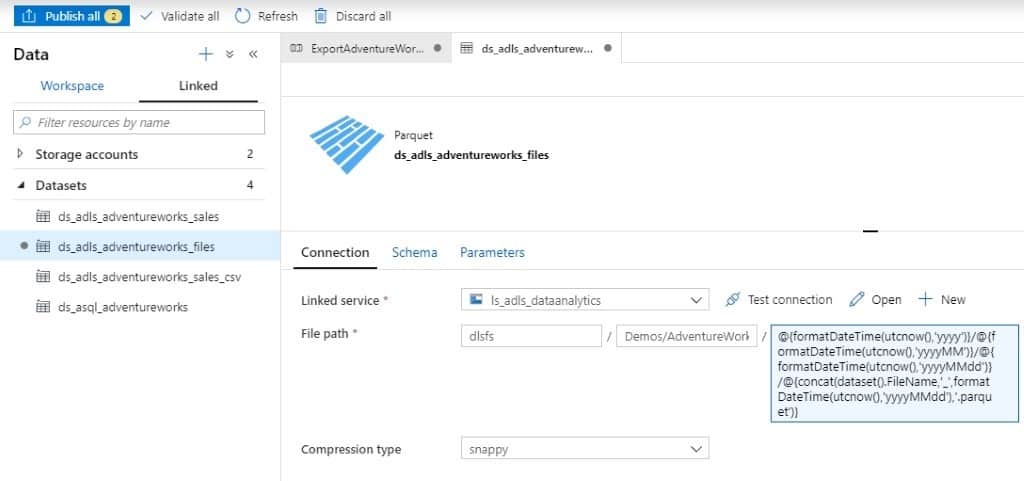

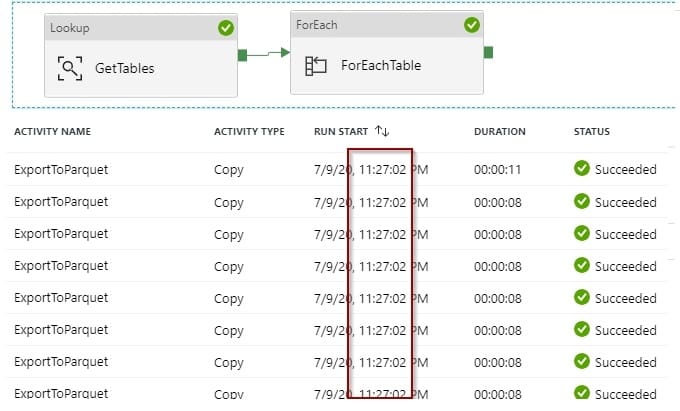

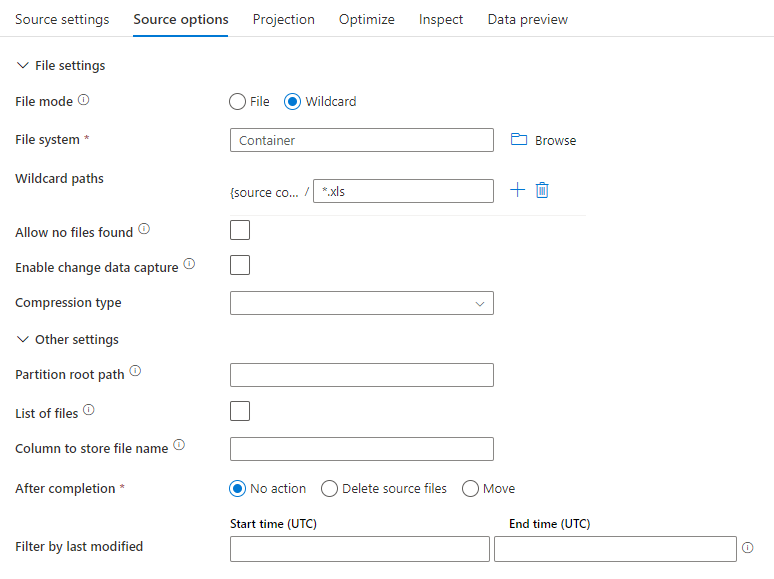

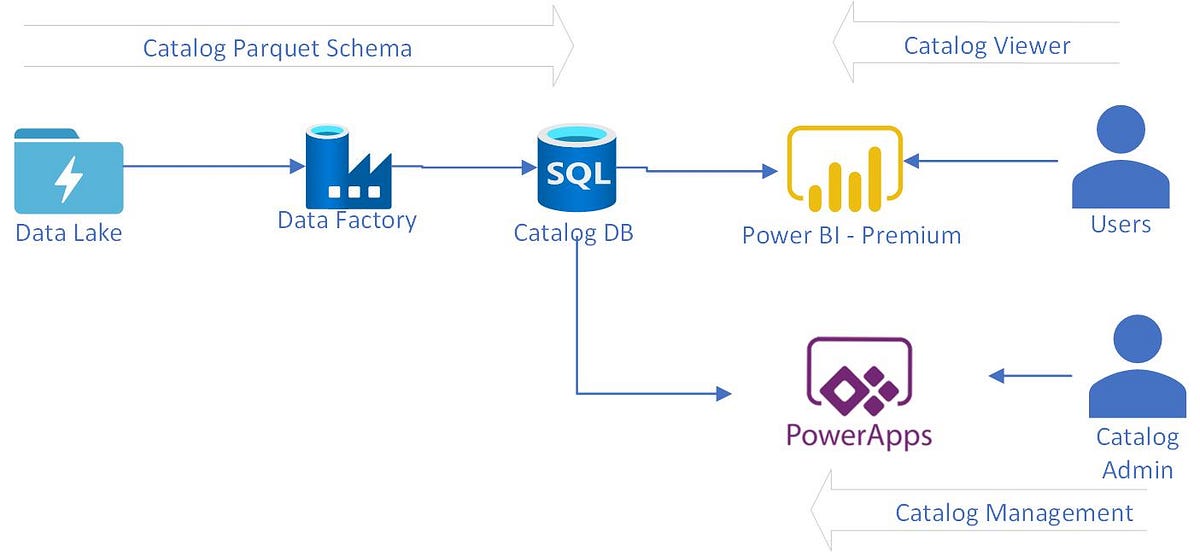

Custom Data Catalog Parquet File using Azure Data Factory | by Balamurugan Balakreshnan | Analytics Vidhya | Medium

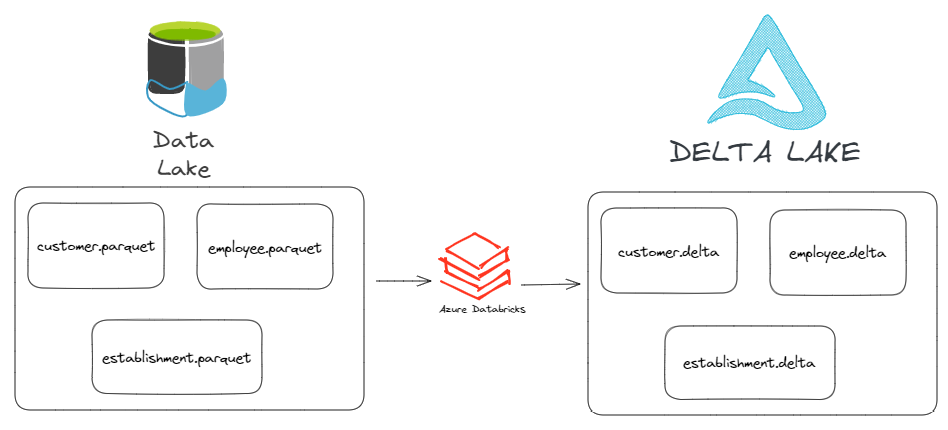

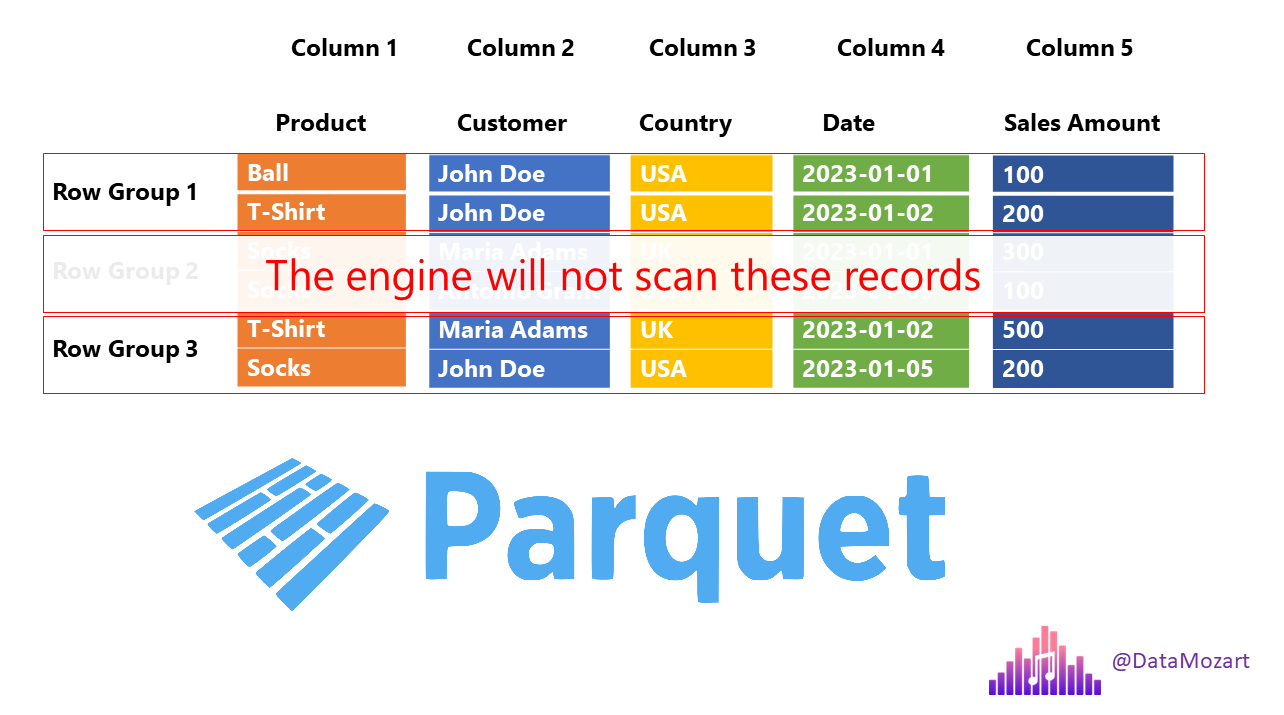

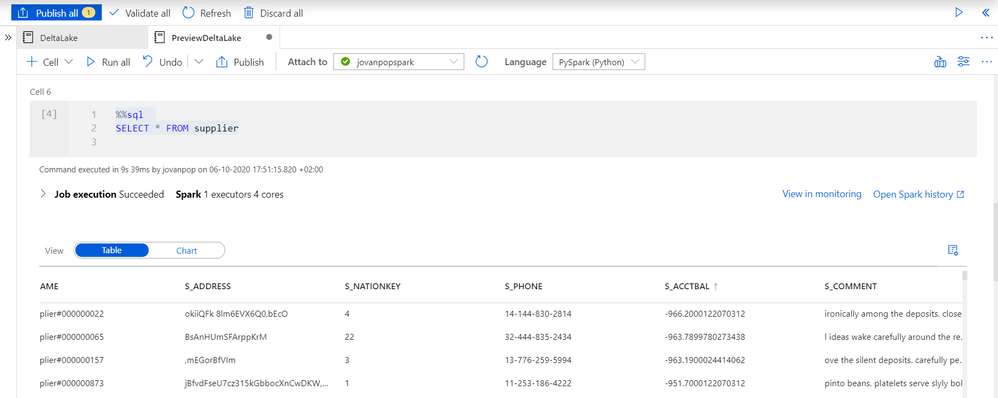

Convert plain parquet files to Delta Lake format using Apache Spark in Azure Synapse Analytics - Microsoft Community Hub

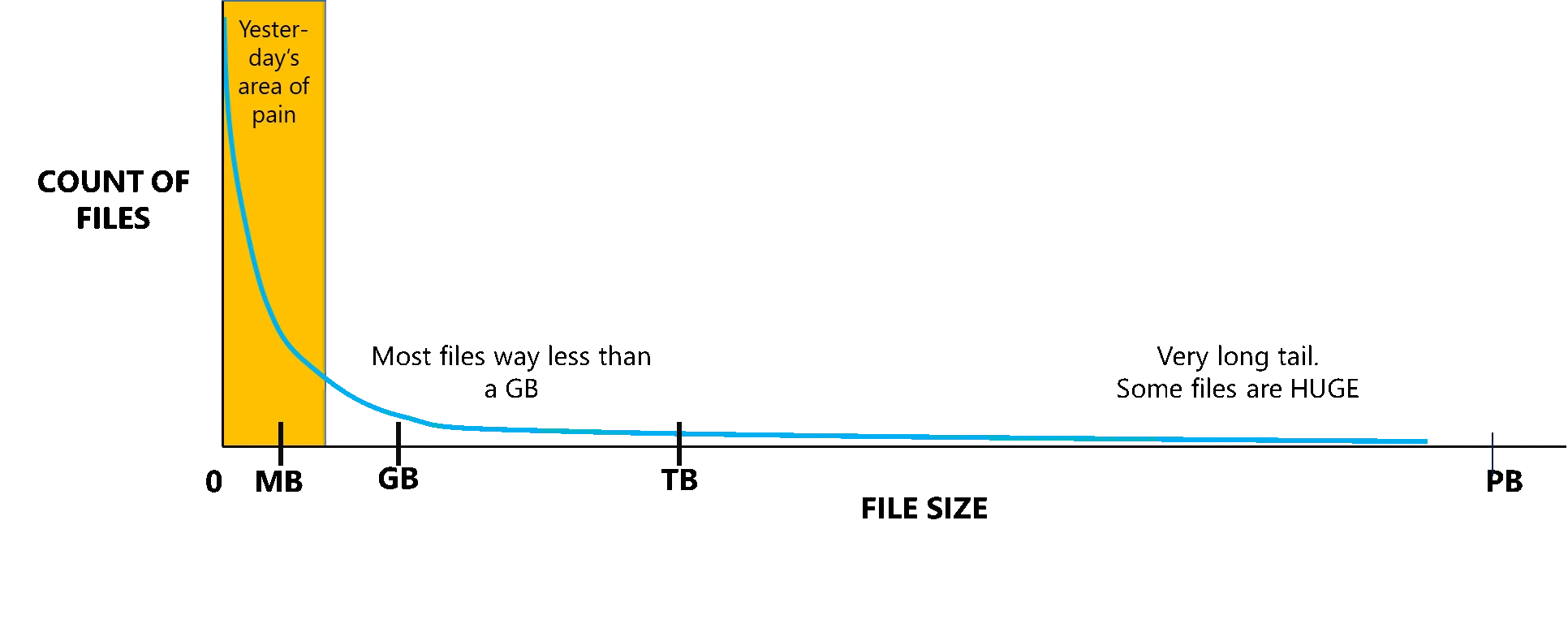

Process more files than ever and use Parquet with Azure Data Lake Analytics | Azure Blog | Microsoft Azure

When we use Azure data lake store as data source for Azure Analysis services, is Parquet file formats are supported? - Stack Overflow
